Agreed - the 68000 did not really sample until February 1980, with volume not available until November 1980.

However, it is one of those crossroads in computing history - where the temptation is to ask “what if?”.

While this is mostly true, you can still invest in hardening the support logic in order to use a promising technology that isn’t ready for production yet. E.g., the Alto used RAM chips when these were still notoriously unreliable, and introduced a quite extensive low-level error-correction layer in hardware to cope with this. Regarding density, mind that the Alto was the “interim Dynabook” – an excessive form factor may allow for some liberty.

I think the crucial observation about the interim Dynabook was to throw money at the problem: this was never to be a commercial proposition with a business case behind it. A research machine, not a product. And so the usual constraints of economics don’t apply.

The BBC Micro was in some small way similar: the design used bleeding-edge DRAM chips, available only from one supplier, and not cheap. The expectation was to make mostly the 16k model, not the 32k model, and to hit volumes of 10,000. When, in the event, the BBC Micro was much more popular than that, and the 32k model very much the only one worth making, the price and availability of those bleeding edge chips presumably relaxed a bit.

Which is to say, in the presence of continual exponential improvement, it pays to try to design for the situation you’ll have at the time of sale, not at the time of design. If only you can hold your nerve, and predict the future well enough.

(The IBM PC also dramatically underestimated the expected sales volumes: as a consequence, the product was priced higher than it would have been, and so ended up more profitable than it would have been.)

In the early days it was very much over-engineered - after all it was designed and built by a mainframe manufacturer.

I remember the thickness of the steel in those early chassis and the hard-drive suspended on rubber bungee mounts.

My employer started buying them in about 1986 for about £3000 each, when you could buy a house for £60,000.

Specifically, according to the 68000 design team, the 68008 was not ready, and IBM wanted the cost reduction of an 8-bit external data bus.

This interview with some members of the 68000 team covers that (as well as some other fascinating bits of history, including discussions of working with Sun and Apollo on their workstations, the relation (or lack thereof) between the 68000 and 6809, etc.):

This would have made some sense, since the 68000 was big-endian, like most of the bigger IBM hardware. The 68000 may have been just ready for market introduction, but reportedly Motorola couldn’t provide the 5,000 pre-production samples required for IBM’s internal evaluation process. (At least, this is what I’ve read. I’m not so sure about the quite excessive number of samples. This may be off by an order of magnitude or two.)

Regarding management not being so sure about the PC: Mind that IBM was struggling throughout the 1970s with a PC design (even before the trinity of 1977). There were several concepts, like “Yellow Bird”, a very promising prototype “Aquarius” based on bubble memory modules, which even made it to the pre-production prototype stage (including a complete marketing concept), and outsourced design studies (e.g., there’s one by Eliot Noyes Associates based on unknown hardware). After the dismissal of “Aquarius” (apparently because of fading confidence in bubble memory), upper management apparently just gave up. At some point, just before Project “Chess”, which became the IBM PC, IBM even considered buying Atari and basing their PC on the Atari 800. (At least, there’s a design study for this.)

Some of this (including images) can be found in “Delete.” by Paul Atkinson.

IBM “Yellow Bird” mockup (Tom Hardy, 1976; image: “Delete.”):

Envisioning home computing with “Yellow Bird” in 1976 (image: “Delete.”)

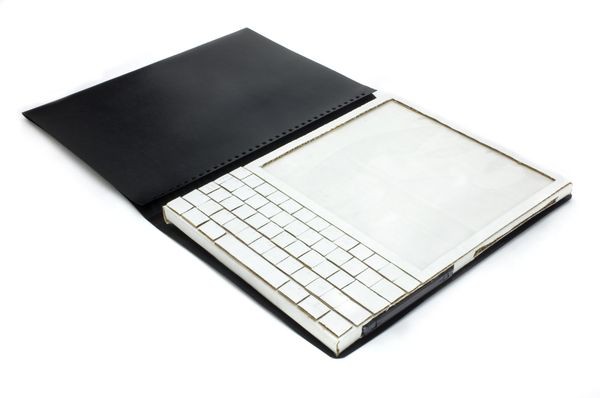

IBM Aquarius (Tom Hardy, 1977; image: “Delete.”):

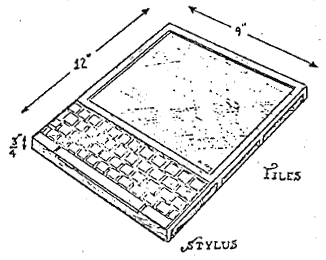

IBM “Atari PC” design study (Tom Hardy, 1979; image: “Delete.”):

Here’s a sketch for the Noyes Associates project:

“The prototypes created for IBM were a bit more interesting than their 1981 PC – this 1977 project was envisioned in three versions: beige, deep red, and teak. Teak? Yep – a real wood cabinet.”

It seems, contrary to common belief, IBM didn’t “miss out on the home computer”, they just tried too hard…

It was sort of available at the end of 1980 in that you could get 4MHz versions (like the Lisa used) but had to wait a while longer before the 8MHz chips could be had.

IBM did launch a 68000 based computer about 10 months after the PC. But it was 2 to 3 times more expensive than the PC.

Regarding IBM’s journey to the PC: It’s quite interesting to see IBM shift from home computing to the office (as first noticeable in the Noyes Associates study and the “IBM Atari”) in the prototypes and design studies above. However, the “PC”, the IBM 5150, wasn’t the disruptive product it is often considered nowadays. At 4.77 MHz and with an 8-bit motherboard, it was hardly faster than the home machines of the time. (With built-in BASIC in ROM and a cassette port, it also wasn’t that dissimilar from the home computer concept, minus the RF output.) The idea was more about merging the various text processing systems and the smart terminal, while crucial software, like databases, would still live on the mainframe, tying the PC into the IBM ecosystem. From this perspective, the 5150 didn’t extend much over the previous IBM 5120, but was more cost-effective and came in a smaller form factor. It wasn’t until the i386 that PCs really became what is considered a PC today, a machine that was capable of shifting business applications from a shared mainframe to a standalone machine. And IBM apparently wasn’t too happy with this. (From this perspective, the original PC was just a short episode, before we got “real PCs” in the form of the i386 machines.)

It was really more for the business customers, who were longing for standardization in the jungle that was acquisition and were looking to IBM for this, that the PC became this “iconic breakthrough”. (And, in the end, it was probably gaming that won the day for the PC.)

The 68000 workstation linked by @jecel is a different beast. As a lab computer it is perfectly able to “stand on its own feet”. However, from a business perspective, there’s quite a difference between a lab machine (where standalone is a requirement and cost isn’t that crucial a factor) and business computing (where you want to tie everything into your ecosystem and scale is a factor – also, “there’s a card for that”, the 1980s’ equivalent of the App Store).

Edit: This was probably also where the Lisa and the Mac failed as “serious” business machines: as a front-end to mainframe applications, they didn’t have much to offer in terms of the GUI, apart from slightly lesser integration, since it was still the text-based mainframe application. What remained, what they were really good at, was the production of individual documents of all sorts, but they still failed on the integration aspect. Which may have been just a bit too much of a personal computer. (Apparently, Jobs learned a lot from this; compare the NeXT, which was really huge on integration.)

A fascinating oral history, and insight into Motorola’s internal operations - involving several of the key members of the 68000 “delivery team”.

How from out of the downturn of 1975, the Motorola microprocessor group delivered a new architecture, and brought it into volume production.

This involved radical internal changes of culture within Motorola and the building of at least one new MOS factory at the cost of some $800 million.

The engineers witnessed the change from learning about punched cards on mainframes at college, to creating an environment where engineers had powerful workstations on every desk. Workstations that primarily used Motorola 68K family devices.

The video interviewees cover approximately the two decades at Motorola between 1975 and 1995, of which 1980 to 1990 was the decade of the 68000.

The interesting twist to this oral history is that the chairman of this meeting was ex-Intel, and there was a lot of camaraderie between once rival organisations. They agreed amongst themselves that Intel and Motorola just did things differently, two completely different approaches to solving the same basic problem.

At almost 3 hours long - it’s an excellent bit of viewing for a spare evening.

Related to the original question, I think, a crowdfunding campaign for a documentary:

https://www.kickstarter.com/projects/messagenotunderstood/message-not-understood/

(About looking at the past to understand the present: many classic photos in the enclosed video. And the line “let’s make sure the computer doesn’t end up like the television” - which makes complete sense to me, but I can imagine it might be dated.)

via mastodon

“Message Not Understood” is by far the most common error message in Smalltalk.

There is a great video of a Lilith Emulator here and some photos

On GitHub there’s an emulator for SIMH running on the Interdata32 emulator, but it needs UNIX 7 installed.

Indeed, the “Atari PC” is real. When we old Atarians first heard about it, we wondered if it was just some fantastic story to get attention. It only came to light in the retro Atari community a few years ago. Though, what I heard about it then was that Atari would have been an OEM manufacturer for IBM, not purchased by them.

Only a very small group inside Atari was involved in creating the prototype for IBM, or even knew about it. When former Atari employees were asked about this a few years ago, almost none of them knew about this proposal. In any case, it fell through. The proposal happened before Atari released the 400/800 in 1979.

Speaking of such stories, Tandy Trower, a former Atari employee, said in 2015 that he worked with Microsoft in about 1978 to get its 6502 Basic, with a specific feature set Atari wanted for their computers, working inside of 8K of ROM, but they couldn’t get it to fit. So, Atari got their Basic from Shepardson Microsystems. The language design they used was inspired by Data General’s Business Basic.

In any case, Atari was so impressed with Microsoft that they offered to buy the company. Bill Gates turned them down. Smart decision, lol!

Indeed, there are only a few kinds of error messages in Smalltalk. I think this is an indicator of its flexibility.

I eventually learned that this message can be trapped by a method/handler: doesNotUnderstand: (often referred to as “DNU”).

I found a use for it in an experiment I tried, where I used a proxy class for contacting websites, using CGI. What was fascinating was that it was super easy to emulate CGI from within Smalltalk. The CGI form maps well onto Smalltalk’s messaging syntax. So, I contemplated that rather than using an API to contact websites, I could just treat websites like they were objects in the Smalltalk system.

The proxy class contained a few defined methods, but most of what I passed to it were messages it knew nothing about. So, they’d get dumped to the DNU handler, which would receive the message as an object. The handler could then unpack the message, and do with it what it wants.
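The idea above can be sketched outside Smalltalk as well. What follows is only a rough analog in Python, not the original experiment: `__getattr__` stands in for `doesNotUnderstand:`, and the class name `WebsiteProxy`, the example URL, and the mapping of message name to path and arguments to query string are all assumptions made for illustration. It builds the CGI-style URL without contacting any site.

```python
from urllib.parse import urlencode


class WebsiteProxy:
    """Hypothetical proxy: messages it does not define become CGI-style URLs."""

    def __init__(self, base_url):
        self.base_url = base_url.rstrip("/")

    def describe(self):
        # A normally defined method: it never reaches the fallback below.
        return f"proxy for {self.base_url}"

    def __getattr__(self, name):
        # Python calls this only when normal lookup fails, which is roughly
        # the role doesNotUnderstand: plays in Smalltalk.
        if name.startswith("_"):
            raise AttributeError(name)

        def handler(**arguments):
            # "Unpack the message": the name becomes the path,
            # the keyword arguments become the query string.
            query = urlencode(arguments)
            url = f"{self.base_url}/{name}"
            return f"{url}?{query}" if query else url

        return handler


# The base URL is a placeholder, not a real endpoint.
site = WebsiteProxy("http://example.com/cgi-bin")
print(site.search(q="smalltalk", page=2))
```

One difference worth noting: in Smalltalk the handler receives the message itself as an object, whereas here the fallback only sees the name and has to return a function that collects the arguments.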

DNU reflects how some of the earlier versions of Smalltalk worked normally: methods were implemented by having each object parse an input stream. With ST-78 and later, they decided on a keyword syntax for message passing, and made DNU a special case for custom message parsing.

Don’t skip the original KiddiComp/Dynabook concept! The Alto was meant to be an initial step toward a mobile slate device, but the idea of an office PC and the new direction of Smalltalk pulled everyone’s attention away from that goal.

True, the technology needed to create a Dynabook was available by the late '70s, and Alan Kay tried pitching the idea to Xerox, but they weren’t interested in developing it.

The closest he got at Xerox was a project he started on in the mid-70s, called NoteTaker.

It ran Smalltalk-78 on 3 Intel processors, IIRC, which Alan called “barely sufficient.” An exciting thing they tried was using the NoteTaker on a commercial flight, and it worked out. I think they marked the occasion by saying it was the first object-oriented system used at 30,000 ft.

If it looks an awful lot like an Osborne 1, that’s because the case design for it was inspired by NoteTaker. Nothing else about it was, though.

There’s a brief moment in The Xerox Alto: A Personal Retrospective (from 2001) where Chuck Thacker said that what Alan Kay was after with the Dynabook was “this,” holding up a tablet. He mentioned that he had a version of Squeak (Smalltalk) on it.

It was a big improvement on the Compaq (3 8086s, as you said, instead of one 8088) and was running a GUI. Instead of releasing this in 1979, Xerox did the Star and a desktop CP/M machine years later.

About Squeak on a tablet: though it was ported to iOS very early on, Apple has never allowed it to be available (you can use it to write an app and release that, as long as you prove there is no way for the users to get to the underlying Smalltalk).

Bob Belleville at PARC proposed a small-scale version of the Star, using Intel or Motorola processors, but at the time he proposed it, it would’ve been more expensive than the Star. That changed a couple of years later, when it became cheaper. It also would’ve required rewriting everything they were doing for the Star, and removing some features. So, they didn’t go forward with it.

BTW, I think, since Chuck Thacker was working for Microsoft Research, the tablet he held up was a Tablet PC. I never used one of those (I worked with Telxons in the 1990s, sometimes running Windows), but I imagine it wasn’t locked down. So, running Squeak on it without restrictions on code would’ve worked when he talked about that.

Re. Apple and Squeak

I used to track this. Last I heard (many years ago), they allowed Squeak on the iPad, but the only way to share code was by uploading projects to a website, so that others could run the code through a web-emulated version of Squeak (i.e., they were not allowed to download code to the iPad).

What I remember is Apple worried that Squeak could be used as a vector for security exploits, since you can access OS functions, and the file system from within Squeak.

Like so many commercial Linux distributions, I imagine apps run as root on iOS, so all apps have to be treated like “potential criminals.” As Alan Kay has said for years, the problem should be solved by designing a secure OS architecture, not by looking at programming with such suspicion. It’s a good point. I’ve seen this with PCs in schools. School districts lock them down, and make it difficult for programming students to download new languages to them (because the language isn’t “certified” by the district).

When I was in school, the 8-bit computers weren’t like this at all. We could run what we wanted on them, without fear of screwing up the system. A good part of that was that the OS was in ROM, so it could not be altered by software. Another was that we didn’t have hard disks on the school computers, and schools could block writes to their floppies by simply covering the “write notch” with tape that was difficult to remove (though this could be circumvented with scissors, but I never saw that). Administration was a lot simpler with this setup, and the risk was a lot lower.

I’m not saying go back to that exact setup. I use it as an illustration that it is possible to secure systems without interfering with the ability of users to do programming, if the powers that be want it. Though, it is a matter of how the system is designed, and currently available system designs may not support current needs. So, new ones would have to be researched.