I suppose the way I’d think of it is that C is an adequately low-level language to do almost all the work of the OS, but also an adequately high-level language to be productive and portable. It does seem that C can’t quite manage the full 100% - perhaps because it has no way to emit some small number of crucial instructions which are needed, other than inline assembly code.
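To make that last point a bit more concrete, here is a minimal sketch of what the inline assembly escape hatch tends to look like. It assumes GCC or Clang extended-asm syntax, and the helper names (`cpu_relax`, `cpu_halt`, `spin_wait`) are made up for illustration, not taken from any particular kernel.

```c
/* Hypothetical helper names; GCC/Clang extended-asm syntax is assumed. */

/* Spin-wait hint: an instruction that ordinary C statements never produce. */
static inline void cpu_relax(void) {
#if defined(__x86_64__) || defined(__i386__)
    __asm__ volatile ("pause" ::: "memory");
#elif defined(__aarch64__)
    __asm__ volatile ("yield" ::: "memory");
#else
    __asm__ volatile ("" ::: "memory");   /* fallback: compiler barrier only */
#endif
}

/* Kernel-only: idle the CPU until the next interrupt.
 * On x86 this is privileged and will fault if called from user mode. */
static inline void cpu_halt(void) {
#if defined(__x86_64__) || defined(__i386__)
    __asm__ volatile ("hlt" ::: "memory");
#elif defined(__aarch64__)
    __asm__ volatile ("wfi" ::: "memory");
#endif
}

/* Everything around the special instruction is still plain, portable C:
 * poll a flag, giving up after max_spins attempts. Returns the spins used. */
unsigned spin_wait(const volatile int *flag, unsigned max_spins) {
    unsigned spins = 0;
    while (!*flag && spins < max_spins) {
        cpu_relax();
        spins++;
    }
    return spins;
}
```

The one-line wrappers are the only non-portable part; the loop that uses them is ordinary C, which is roughly the split I have in mind.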

I wonder if you would read a textbook, in this case. Perhaps what you’d draw on is practical knowledge, of using and perhaps reverse-engineering - or at least, deeply understanding - some existing OS on some existing hardware. Perhaps a minicomputer.

Famously, Linus Torvalds started by writing a task switcher. Which I think illustrates a very very important question: what do you expect your OS to do? It would be natural, I think, to start with the absolute minimum, and build up, but not build up any further than you felt the need.

One might, then, ask this: what OS functions does CP/M offer? Or the very first Unix? Or the very first Linux? I suspect ‘glorified program loader’ would cover a major part of it - and I don’t mean that in a demeaning way. If I can load and run a program, if that program can read and write files, if it can take input from the keyboard and output to the screen, that’s an operating system. For sure it’s handy to have things like multitasking, interprocess communication, memory protection, multiple users, abstraction layers for input and output and filing systems - but none of that is essential, just very handy.
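As a rough sketch of how small that core can be, here is the ‘glorified program loader’ idea written as hosted C. Everything in it is an assumption for illustration: the `os_services` table, `run_program` and `demo_program` are invented names, the services are faked on top of `stdio`, and "loading" is reduced to calling a function pointer. It is not how CP/M or any real system lays things out.

```c
#include <stdio.h>

/* Hypothetical service table: the handful of calls a loaded program may use. */
typedef struct os_services {
    int  (*con_in)(void);                                         /* keyboard */
    void (*con_out)(int ch);                                      /* screen   */
    long (*file_read)(const char *name, void *buf, long len);
    long (*file_write)(const char *name, const void *buf, long len);
} os_services;

/* A "program" is just an entry point that is handed the service table. */
typedef int (*program_entry)(const os_services *os);

/* Stand-in implementations built on the host's stdio. */
static int  host_con_in(void)    { return getchar(); }
static void host_con_out(int ch) { putchar(ch); }

static long host_file_read(const char *name, void *buf, long len) {
    FILE *f = fopen(name, "rb");
    if (!f) return -1;
    long n = (long)fread(buf, 1, (size_t)len, f);
    fclose(f);
    return n;
}

static long host_file_write(const char *name, const void *buf, long len) {
    FILE *f = fopen(name, "wb");
    if (!f) return -1;
    long n = (long)fwrite(buf, 1, (size_t)len, f);
    fclose(f);
    return n;
}

static const os_services host_services = {
    host_con_in, host_con_out, host_file_read, host_file_write
};

/* The whole "OS": hand the program its services and jump to it. */
int run_program(program_entry entry) {
    return entry(&host_services);
}

/* Example program: write a file, read it back, echo it to the console.
 * Returns the number of bytes read back, or -1 on failure. */
int demo_program(const os_services *os) {
    char buf[16];
    if (os->file_write("demo.tmp", "hello", 5) != 5) return -1;
    long n = os->file_read("demo.tmp", buf, (long)sizeof buf);
    for (long i = 0; i < n; i++) os->con_out(buf[i]);
    os->con_out('\n');
    return (int)n;
}
```

Calling `run_program(demo_program)` exercises three of the four services. Multitasking, protection and the rest would all be layers added around this, which is why I'd call them handy rather than essential.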

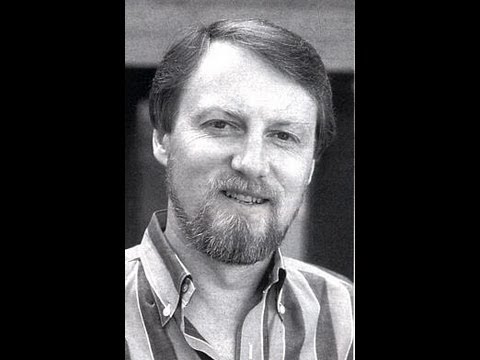

It’s a historical question - and an interesting one - as to how Kildall got the knowledge he needed. I don’t know the answer.

![The Man Who COULD Have Been Bill Gates [Gary Kildall]](https://img.youtube.com/vi/sDIK-C6dGks/maxresdefault.jpg)